Hello,

I'm using Filebeat and Logstash to analyze logs from Wazuh. The logs are JSON files in which each line is a JSON string. I can read the log correctly and push it into Elasticsearch through Logstash, and the fields are populated correctly, but in the Discover tab in Kibana the _source field shows the raw JSON.
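To make the input format concrete, here is a minimal Python sketch of what "each line is a JSON string" means. The sample lines are made up for illustration: only `timestamp` and `srcip` are field names that appear in my pipeline below, and the values and the `rule` object are hypothetical, not real Wazuh output.

```python
import json

# Hypothetical two-line sample in the same shape as alerts.json:
# one complete JSON object per line. Only "timestamp" and "srcip" are
# field names taken from the pipeline; everything else is invented.
sample = (
    '{"timestamp": "2017-01-01T10:00:00+0000", "srcip": "10.0.0.1", "rule": {"level": 5}}\n'
    '{"timestamp": "2017-01-01T10:00:05+0000", "srcip": "10.0.0.2", "rule": {"level": 3}}\n'
)


def parse_json_lines(text):
    """Decode each non-empty line as its own JSON document."""
    return [json.loads(line) for line in text.splitlines() if line.strip()]


alerts = parse_json_lines(sample)
for alert in alerts:
    # Each decoded line becomes a dict whose keys should end up as separate fields.
    print(sorted(alert.keys()))
```

Each decoded line is one event, and its top-level keys are the fields I expect to see listed individually in Kibana.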

Is there any way to decode the _source raw json and show the fields in the discover tab?

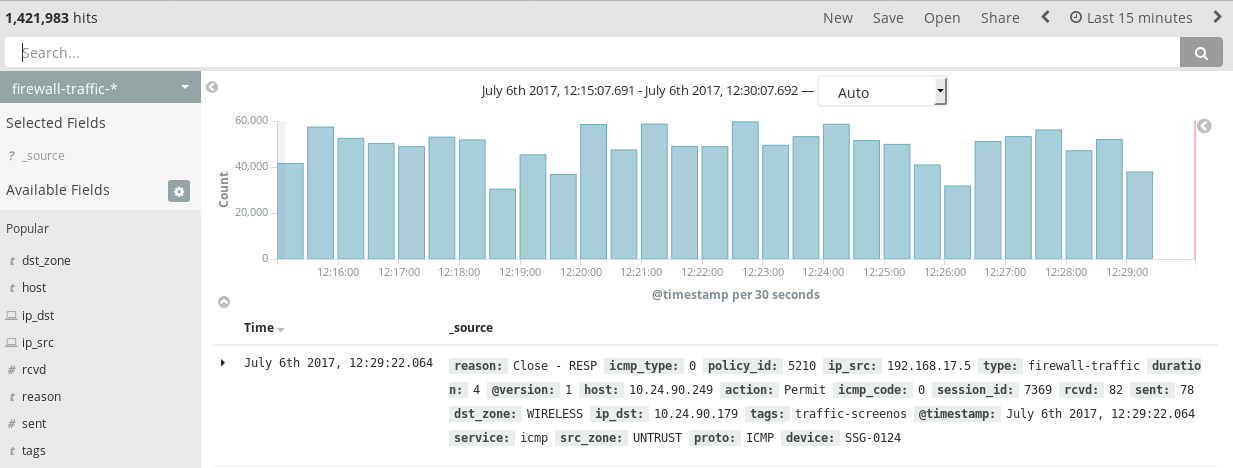

This is what I'm getting now:

This is the way I want the _source field to behave:

My filebeat configuration is:

    filebeat:
      prospectors:
        - input_type: log
          paths:
            - "/var/ossec/logs/alerts/alerts.json"
          document_type: wazuh-ossec
          codec: json_lines
          json.message_key: log
          json.keys_under_root: true
          json.overwrite_keys: true
    output:
      logstash:
        hosts: ["10.114.243.54:5000"]

My logstash pipeline for this index is:

    input {
      beats {
        host => "10.114.243.54"
        port => 5000
        codec => "json_lines"
        type => "wazuh-ossec"
      }
    }

    filter {
      if [type] == "wazuh-ossec" {
        geoip {
          source => "srcip"
          target => "GeoLocation"
          fields => ["city_name", "continent_code", "country_code2", "country_name", "region_name", "location"]
        }
        date {
          match => ["timestamp", "ISO8601"]
          target => "@timestamp"
        }
        mutate {
          remove_field => [ "timestamp", "beat", "fields", "input_type", "tags", "count", "@version", "log", "offset"]
        }
      }
    }

    output {
      if [type] == "wazuh-ossec" {
        elasticsearch {
          hosts => ["localhost:9200"]
          index => "wazuh-alerts-%{+YYYY.MM.dd.HH}"
          codec => "json_lines"
          document_type => "wazuh-ossec"
          template => "/etc/logstash/wazuh-elastic5-template.json"
          template_name => "wazuh"
          template_overwrite => true
        }
      }
    }

What am I missing?