I have latitude and longitude data in a PostgreSQL database, and I need to move that data to Elasticsearch through a Logstash pipeline. I have tried several methods, but none of them has worked so far.

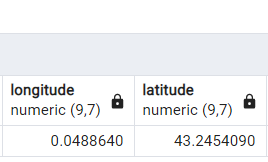

This is a sample record in PostgreSQL.

I am trying to migrate this data from PostgreSQL to Elasticsearch using the Logstash pipeline below:

```
input {
  jdbc {
    type => "dvf"
    jdbc_connection_string => "jdbc:postgresql://<IP>:<Port>/<db>"
    jdbc_user => "<user-name>"
    jdbc_password => "<password>"
    jdbc_driver_library => "/usr/share/logstash/postgresql-42.4.0.jar"
    jdbc_driver_class => "org.postgresql.Driver"
    statement => "SELECT * FROM public.dvf"
    jdbc_fetch_size => 10
    jdbc_paging_enabled => true
  }
}

filter {
  mutate {
    convert => {
      "adresse_numero" => "string"
      "adresse_code_voie" => "string"
      "code_postal" => "string"
      "code_departement" => "string"
      "id_parcelle" => "string"
      "nom_commune" => "string"
      "latitude" => "string"
      "longitude" => "string"
    }
  }
}

output {
  # stdout { codec => rubydebug }
  if [type] == "dvf" {
    elasticsearch {
      index => "search-dvf-data-%{+yyyy.MM.dd}"
      hosts => ["https://es-host"]
      ssl => true
      ssl_certificate_verification => true
      user => '<es-use>'
      password => '<es-password>'
    }
  }
}
```

The issue is that I do not get the correct data in Elasticsearch.

Here is what I get in Elasticsearch:

In the `filter` section of the pipeline above, I convert `latitude` and `longitude` to strings.
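To see what Elasticsearch actually did with these fields, one option is to inspect the index mapping (`GET search-dvf-data-*/_mapping` in Kibana Dev Tools). The Python sketch below is only an illustration: `field_types` is a hypothetical helper that takes the parsed JSON response and reports the mapped type of each listed top-level field. With dynamic mapping, a string is typically mapped as `text` (with a `keyword` sub-field) and a JSON float as `float`; neither becomes `geo_point`.

```python
from __future__ import annotations


def field_types(mapping_response: dict, fields: list[str]) -> dict[str, dict[str, str | None]]:
    """Report the mapped type of each top-level field, per index (hypothetical helper).

    `mapping_response` is the parsed JSON body of `GET <index-pattern>/_mapping`.
    A field mapped as a plain object (sub-fields but no "type") is reported as
    "object"; a field that is not mapped at all is reported as None.
    """
    report: dict[str, dict[str, str | None]] = {}
    for index_name, body in mapping_response.items():
        props = body.get("mappings", {}).get("properties", {})
        types: dict[str, str | None] = {}
        for field in fields:
            spec = props.get(field)
            if spec is None:
                types[field] = None  # field never mapped in this index
            elif "type" in spec:
                types[field] = spec["type"]  # e.g. "text", "float", "geo_point"
            else:
                types[field] = "object"  # e.g. {lat, lon} indexed without a geo_point mapping
        report[index_name] = types
    return report
```

If no listed field comes back as `geo_point`, Kibana maps and geo queries will not treat the records as locations, whatever the values look like.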

To fix this, I also tried converting them to `float` instead, but the result is the same.
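That would be consistent with how dynamic mapping works: Elasticsearch does not infer a `geo_point` from separate latitude and longitude fields, whatever their type, so the field has to be mapped explicitly before the first document is indexed. Because the index name is date-stamped, an index template matching `search-dvf-data-*` is one way to do that. A minimal sketch, assuming Elasticsearch 7.8 or later (composable templates via `_index_template`), a hypothetical template name `dvf-template` and a hypothetical target field named `location`:

```python
from __future__ import annotations

import json


def dvf_index_template(pattern: str = "search-dvf-data-*", geo_field: str = "location") -> dict:
    """Build a composable index template body that maps `geo_field` as geo_point.

    The pattern matches the daily indices written by the Logstash output;
    the field name "location" is an assumption, not part of the original pipeline.
    """
    return {
        "index_patterns": [pattern],
        "template": {
            "mappings": {
                "properties": {
                    geo_field: {"type": "geo_point"},
                }
            }
        },
    }


if __name__ == "__main__":
    # Paste the printed JSON into Kibana Dev Tools after: PUT _index_template/dvf-template
    print(json.dumps(dvf_index_template(), indent=2))
```

A template only applies to indices created after it exists, so an index already created for the current day keeps its old mapping until it is deleted and the pipeline is run again.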

How can I convert the coordinates in the Logstash filter so that they are stored properly, as geo coordinates, in the Elasticsearch index?
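With such a mapping in place, the remaining step is to give each event a single field that Elasticsearch accepts as a `geo_point`, for example an object with `lat` and `lon` keys. In Logstash this is commonly done with two consecutive `mutate` blocks, first `convert => { "latitude" => "float" "longitude" => "float" }` and then `rename => { "latitude" => "[location][lat]" "longitude" => "[location][lon]" }`; they need to be separate blocks because within one `mutate`, `rename` runs before `convert`. The Python function below is a hypothetical illustration of the same reshaping on a single row, not Logstash code; the range check is an extra assumption.

```python
from __future__ import annotations


def to_geo_document(row: dict, lat_key: str = "latitude", lon_key: str = "longitude",
                    geo_field: str = "location") -> dict:
    """Return a copy of `row` with lat/lon moved into a {"lat": ..., "lon": ...} object.

    Mirrors the Logstash convert + rename steps for one row (hypothetical helper).
    """
    doc = dict(row)  # shallow copy, so the caller's row is not modified
    lat, lon = doc.get(lat_key), doc.get(lon_key)
    if lat is None or lon is None:
        return doc  # incomplete coordinates: leave the row as it is
    lat, lon = float(lat), float(lon)  # accepts str, int, float or Decimal values
    if not (-90.0 <= lat <= 90.0 and -180.0 <= lon <= 180.0):
        raise ValueError(f"coordinates out of range: lat={lat}, lon={lon}")
    del doc[lat_key], doc[lon_key]
    # Object form uses explicit keys, so lat and lon cannot be swapped.
    # (The array form is [lon, lat] and the string form is "lat,lon".)
    doc[geo_field] = {"lat": lat, "lon": lon}
    return doc
```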

Any help would be appreciated.