Gentlemen,

I've configured Packetbeat on a machine with plenty of resources (72 GB RAM, 24 cores, Intel Xeon X5670). It's listening on two 10 Gb interfaces that, combined, reach about 1 Gbps during peak hours. I'm running Suricata on the same machine, and both it and Packetbeat listen on the same interfaces. Suricata is currently using about 700% CPU and 5 GB of RAM. Packetbeat tops out at about 300% CPU and 4 GB of RAM.

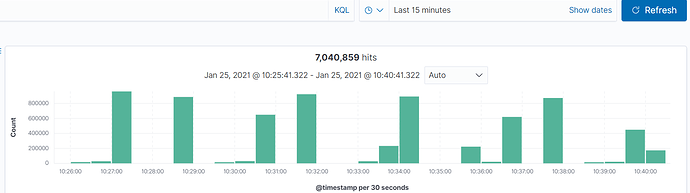

But I'm seeing events accumulating, and probably some packet loss in Packetbeat, as can be seen below:

On the machine (RH 7.9, fully patched) I can see, by following the journal, that it hangs from time to time, as seen below.

Jan 25 10:42:39 XXXXX packetbeat[4010]: 2021-01-25T10:42:39.135-0300 ERROR [pgsql] pgsql/parse.go:544 Pgsql invalid column_length=4294967295, buffer_length=62, i=3

Jan 25 10:45:17 XXXXX packetbeat[4010]: 2021-01-25T10:45:17.673-0300 ERROR [pgsql] pgsql/parse.go:544 Pgsql invalid column_length=4294967295, buffer_length=172, i=8

From what I could see, the vast majority of events arrive when the journal "stops". The packets never stop coming in on the interfaces.

I've already tried stopping Suricata, disabling flows, changing the flow period (I'm using the default 30s), changing the internal queue size, etc. But I always end up with this problem and a maximum of 10k events per second on this machine.
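For reference, the knobs mentioned above correspond to Packetbeat options along these lines. This is only a sketch: the option names are Packetbeat's own, but every value shown is an illustrative assumption, not one of the values actually tested.

```yaml
# Illustrative sketch only; all values below are assumptions.
packetbeat.flows:
  enabled: false            # "disabling flows"
  # period: 10s             # how often flow events are reported
  # timeout: 30s            # idle time before a flow is considered finished

queue.mem:
  events: 65536             # internal queue size (hypothetical value)
  flush.min_events: 2048    # minimum batch handed to the output (hypothetical value)
  flush.timeout: 1s         # maximum wait before flushing a partial batch
```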

My ES cluster is very lightly loaded (other sources also feed into it, and I've seen it reach about 70k primary events per second), and I don't have any additional pipelines running on these events. We have 3 master nodes, 4 ingest nodes, and 10 data nodes.

Can you suggest what the problem may be, or a configuration that I can try? Thanks!

My packetbeat configuration is this:

packetbeat.flows:
  enabled: true
  period: -1s
  timeout: 30s
packetbeat.interfaces.device: any
packetbeat.interfaces.type: af_packet
packetbeat.interfaces.buffer_size_mb: 100
packetbeat.interfaces.with_vlan: true
max_procs: 256
packetbeat.ignore_outgoing: true
packetbeat.interfaces.auto_promisc_mode: true
packetbeat.interfaces.snaplen: 1514
packetbeat.protocols:
- enabled: false
  type: icmp
- ports:
  - 5672
  type: amqp
- ports:
  - 9042
  type: cassandra
- ports:
  - 67
  - 68
  type: dhcpv4
- ports:
  - 53
  type: dns
- ports:
  - 80
  - 8080
  - 8000
  - 5000
  - 8002
  - 804
  type: http
- type: memcache
- ports:
  - 3306
  - 3307
  type: mysql
- ports:
  - 5432
  type: pgsql
- ports:
  - 6379
  type: redis
- ports:
  - 9090
  type: thrift
- ports:
  - 27017
  type: mongodb
- ports:
  - 2049
  type: nfs
- ports:
  - 443
  - 993
  - 995
  - 5223
  - 8443
  - 8883
  - 9243
  type: tls
processors:
setup.ilm.overwrite: false
setup.kibana:
  host: https://XXXXXXXXX:443
  ssl.verification_mode: certificate
setup.template.settings:
  index.number_of_shards: 5
ssl.certificate_authorities: /etc/packetbeat/certs/XXXXXXXXX.pem
output:
  elasticsearch:
    bulk_max_size: 200
    compression_level: 0
    hosts:
    - https://XXXXXXXXX:9200
    - https://XXXXXXXXX:9200
    - https://XXXXXXXXX:9200
    - https://XXXXXXXXX:9200
    loadbalance: true
    password: XXXXXXXXX
    ssl.verification_mode: certificate
    username: XXXXXXXXX
    worker: 1
logging.level: "error"
logging:
  files:
    rotateeverybytes: 10485760