I'm using Logstash to ingest logs stored on AWS S3. My configuration file looks like this:

```
input {
  s3 {
    "access_key_id" => "REDACTED"
    "secret_access_key" => "REDACTED"
    "bucket" => "REDACTED"
    "exclude_pattern" => "^(?!lin-gitlab-gl-stage-\d+-\d+-\d+-\d+\/gitlab-workhorse\/(current|\S+.s))"
    "gzip_pattern" => "\.s?$"
    "region" => "ap-southeast-1"
    "id" => "gitlab-workhorse"
    "sincedb_path" => "/var/lib/logstash/plugins/inputs/s3/sincedb_gitlab_workhorse"
    "type" => "workhorse"
  }

  s3 {
    "access_key_id" => "REDACTED"
    "secret_access_key" => "REDACTED"
    "bucket" => "REDACTED"
    "exclude_pattern" => "^(?!lin-gitlab-gl-stage-\d+-\d+-\d+-\d+\/gitlab-rails\/api_json.log(.\d+.gz)?)"
    "gzip_pattern" => "\.gz?$"
    "region" => "ap-southeast-1"
    "id" => "gitlab-rails"
    "sincedb_path" => "/var/lib/logstash/plugins/inputs/s3/sincedb_gitlab_rails"
    "type" => "api"
  }
}

filter {
  json {
    source => "message"
  }

  if [remote_ip] == "127.0.0.1" {
    drop {}
  }
}

output {
  amazon_es {
    hosts => ["REDACTED"]
    region => "us-east-1"
    index => "stage-gitlab-%{type}-%{+YYYY.MM.dd}"
  }
}
```
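To check which S3 keys each input would actually pick up, the two `exclude_pattern` and `gzip_pattern` values can be exercised outside Logstash. This is a rough Python sketch, not the plugin's code. It assumes that the s3 input skips any key in which `exclude_pattern` finds a match and decompresses any key that `gzip_pattern` matches. It also relies on Python's `re` treating these particular constructs (`^`, `(?!...)`, `\d`, `\S`, unescaped `.`) the same way Ruby's regex engine does. The object keys are hypothetical.

```python
import re

# Patterns copied verbatim from the two s3 inputs in the config above.
WORKHORSE_EXCLUDE = r"^(?!lin-gitlab-gl-stage-\d+-\d+-\d+-\d+\/gitlab-workhorse\/(current|\S+.s))"
WORKHORSE_GZIP = r"\.s?$"
RAILS_EXCLUDE = r"^(?!lin-gitlab-gl-stage-\d+-\d+-\d+-\d+\/gitlab-rails\/api_json.log(.\d+.gz)?)"
RAILS_GZIP = r"\.gz?$"


def is_read(key: str, exclude_pattern: str) -> bool:
    """Assumed behaviour: a key is skipped when exclude_pattern matches it."""
    return re.search(exclude_pattern, key) is None


def is_gzip(key: str, gzip_pattern: str) -> bool:
    """Assumed behaviour: a key is decompressed when gzip_pattern matches it."""
    return re.search(gzip_pattern, key) is not None


# Hypothetical object keys; substitute real ones from the bucket listing.
keys = [
    "lin-gitlab-gl-stage-10-0-1-23/gitlab-workhorse/current",
    "lin-gitlab-gl-stage-10-0-1-23/gitlab-workhorse/@4000000050f0d8f41c3d0e54.s",
    "lin-gitlab-gl-stage-10-0-1-23/gitlab-workhorse/newstate",
    "lin-gitlab-gl-stage-10-0-1-23/gitlab-rails/api_json.log",
    "lin-gitlab-gl-stage-10-0-1-23/gitlab-rails/api_json.log.1.gz",
]

for key in keys:
    print(key)
    print("  workhorse input: read =", is_read(key, WORKHORSE_EXCLUDE),
          "gzip =", is_gzip(key, WORKHORSE_GZIP))
    print("  rails input:     read =", is_read(key, RAILS_EXCLUDE),
          "gzip =", is_gzip(key, RAILS_GZIP))
```

The `.` in `\S+.s` is unescaped, so the workhorse pattern admits more than names ending in `.s`. For example, the hypothetical `newstate` key above is read. Similarly, `\.gz?$` matches a trailing `.g` as well as `.gz`. Running this against a real key listing shows which objects each input consumes.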

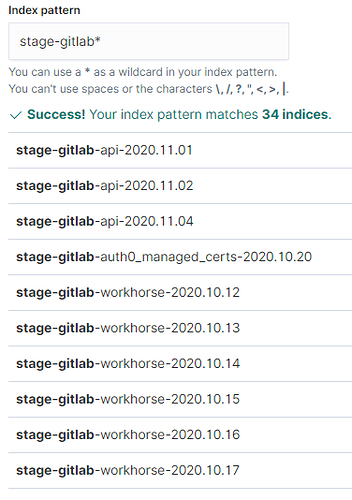

So, given that I'm explicitly using `type` as part of the index name, I would expect this configuration to create only indices whose names start with `stage-gitlab-api` or `stage-gitlab-workhorse`.

However, I'm also seeing indices where the `type` portion of the name is `w`, `ssa`, `ss`, `slo`, `seccft` and so on, e.g. `stage-gitlab-seccft-2020.11.04`.
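The index name is resolved per event at output time. It therefore reflects whatever value the `type` field holds when the event reaches `amazon_es`, which is not necessarily the value the s3 input assigned. Below is a simplified Python model of that substitution, not Logstash's actual sprintf implementation. In this model, `%{field}` is replaced by the event's field value and `%{+YYYY.MM.dd}` by the event's `@timestamp` in UTC. A reference to a missing field is left as literal text. The event dictionaries are hypothetical.

```python
import re
from datetime import datetime, timezone


def expand_index(template: str, event: dict) -> str:
    """Simplified model of Logstash's %{...} substitution for index names."""

    def substitute(match):
        ref = match.group(1)
        if ref.startswith("+"):
            # Only the YYYY.MM.dd date pattern used in this config is modelled.
            fmt = ref[1:].replace("YYYY", "%Y").replace("MM", "%m").replace("dd", "%d")
            return event["@timestamp"].astimezone(timezone.utc).strftime(fmt)
        value = event.get(ref)
        # A missing field leaves the reference as literal text.
        return match.group(0) if value is None else str(value)

    return re.sub(r"%\{([^}]+)\}", substitute, template)


TEMPLATE = "stage-gitlab-%{type}-%{+YYYY.MM.dd}"
ts = datetime(2020, 11, 4, 3, 15, tzinfo=timezone.utc)

# Hypothetical events: the index follows the type value present at output time.
print(expand_index(TEMPLATE, {"type": "workhorse", "@timestamp": ts}))  # stage-gitlab-workhorse-2020.11.04
print(expand_index(TEMPLATE, {"type": "seccft", "@timestamp": ts}))     # stage-gitlab-seccft-2020.11.04
```

An index such as `stage-gitlab-seccft-2020.11.04` therefore implies that some events reached the output with `type` set to `seccft`. One way to find where that value comes from is to add a temporary `stdout { codec => rubydebug }` output and inspect those events.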

Can anyone explain why this is happening, please?