Elasticsearch version: 6.2.3

JVM version:

openjdk version "1.8.0_162"

OpenJDK Runtime Environment (build 1.8.0_162-8u162-b12-0ubuntu0.16.04.2-b12)

OpenJDK 64-Bit Server VM (build 25.162-b12, mixed mode)

OS version:

Linux elasticsearch-6-2-3-client-eu-0 4.13.0-1012-gcp #16-Ubuntu SMP Thu Mar 15 12:00:42 UTC 2018 x86_64 x86_64 x86_64 GNU/Linux

The instances are running in Google Cloud. Client and data node instances are both n1-standard-16 (16 vCPUs and 60GB memory).

I'm trying to optimise the indexing rate, which is currently 25k/sec but needs to be much faster. One possible issue seems to be that a single data node takes on most of the work during a bulk import.

I bulk import in 10MB chunks and have tried lowering that all the way to 3MB with no change. A new connection is created to each client node and I round robin between them when importing. I have also tried a single connection rather than a new one for each client node, with no difference.
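As a rough illustration of the batching and round-robin scheme described above, here is a minimal sketch. It only builds the NDJSON bulk bodies and pairs them with hosts; it does not send anything. The function names, the index name `events`, the type name `doc` and the host labels are all hypothetical, not taken from the original setup.

```python
import json
from itertools import cycle


def build_batches(docs, index, doc_type="doc", max_bytes=10 * 1024 * 1024):
    """Yield NDJSON bulk bodies of at most roughly max_bytes each.

    doc_type is a hypothetical mapping type name; Elasticsearch 6.x bulk
    actions still carry a type.
    """
    action = json.dumps({"index": {"_index": index, "_type": doc_type}})
    lines, size = [], 0
    for doc in docs:
        entry = action + "\n" + json.dumps(doc) + "\n"
        entry_size = len(entry.encode("utf-8"))
        # Close the current batch before it would exceed the size limit.
        if lines and size + entry_size > max_bytes:
            yield "".join(lines)
            lines, size = [], 0
        lines.append(entry)
        size += entry_size
    if lines:
        yield "".join(lines)


def assign_round_robin(batches, hosts):
    """Pair each batch with the next host in rotation."""
    return list(zip(cycle(hosts), batches))


# Tiny demonstration with a small size limit so several batches are produced.
docs = [{"n": i} for i in range(5)]
batches = list(build_batches(docs, "events", max_bytes=100))
assignment = assign_round_robin(batches, ["client-0", "client-1"])
```

Each `(host, body)` pair would then be posted to that host's `_bulk` endpoint by whatever HTTP client the importer already uses.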

The setup that I have is as follows:

Master Nodes: Three

Client Nodes: Two

Data Nodes: Four

The bulk thread pool size is set to 17 on the client and data nodes.

| node_name | name | active | queue | rejected |
|---|---|---|---|---|
| elasticsearch-6-2-3-data-eu-1 | bulk | 1 | 0 | 0 |
| elasticsearch-6-2-3-client-eu-1 | bulk | 0 | 0 | 0 |
| elasticsearch-6-2-3-master-eu-2 | bulk | 0 | 0 | 0 |
| elasticsearch-6-2-3-client-eu-0 | bulk | 0 | 0 | 0 |
| elasticsearch-6-2-3-master-eu-0 | bulk | 0 | 0 | 0 |
| elasticsearch-6-2-3-data-eu-3 | bulk | 17 | 15 | 0 |
| elasticsearch-6-2-3-master-eu-1 | bulk | 0 | 0 | 0 |
| elasticsearch-6-2-3-data-eu-2 | bulk | 1 | 0 | 0 |
| elasticsearch-6-2-3-data-eu-0 | bulk | 0 | 0 | 0 |
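Output like the above typically comes from something along the lines of `GET _cat/thread_pool/bulk?v&h=node_name,name,active,queue,rejected` (the exact request used here is an assumption). A minimal sketch for picking out the nodes with a backlog from that whitespace-separated text, using three of the rows posted above as sample input; the helper names are hypothetical:

```python
def parse_thread_pool(text):
    """Parse whitespace-separated _cat/thread_pool output with a header row."""
    lines = [ln.split() for ln in text.splitlines() if ln.strip()]
    header, body = lines[0], lines[1:]
    rows = []
    for fields in body:
        row = dict(zip(header, fields))
        # Convert the numeric columns so they can be compared.
        for key in ("active", "queue", "rejected"):
            row[key] = int(row[key])
        rows.append(row)
    return rows


def nodes_with_backlog(rows):
    """Return the names of nodes whose bulk queue is not empty."""
    return [r["node_name"] for r in rows if r["queue"] > 0]


sample = """
node_name name active queue rejected
elasticsearch-6-2-3-data-eu-1 bulk 1 0 0
elasticsearch-6-2-3-data-eu-3 bulk 17 15 0
elasticsearch-6-2-3-data-eu-2 bulk 1 0 0
"""

rows = parse_thread_pool(sample)
hot = nodes_with_backlog(rows)
```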

What is the size of your documents? The number of events per second is generally not a very good measurement of performance as the document size has a significant impact on the amount of work needed to be done per document.

How are you indexing the documents? Do requests go to the client nodes or directly to the data nodes? How many concurrent indexing threads/processes do you use? How many indices and shards are you actively indexing into? Are these evenly distributed across the data nodes?

@Christian_Dahlqvist

The document sizes vary quite widely: some are less than 1KB, others go up to 15KB.

The requests go to the client nodes. I run 15 concurrent processes, each of them reusing the same HTTP connections to the client nodes. I make a new HTTP connection to each ES client node and round robin between them when making a request. For this test I am indexing into one index, and it seems to be evenly distributed across the data nodes.

Have you determined what is limiting performance? Is the above monitoring screenshot from when you are indexing? What do CPU, disk I/O and iowait look like? Are you using nested documents and/or parent/child? Are you specifying the document IDs from the application or allowing Elasticsearch to set them?

@Christian_Dahlqvist

Well I'm pretty certain it is to do with the data nodes being unbalanced during a bulk import. I would assume that the load should be distributed across all data nodes rather than giving one data node most of the load?

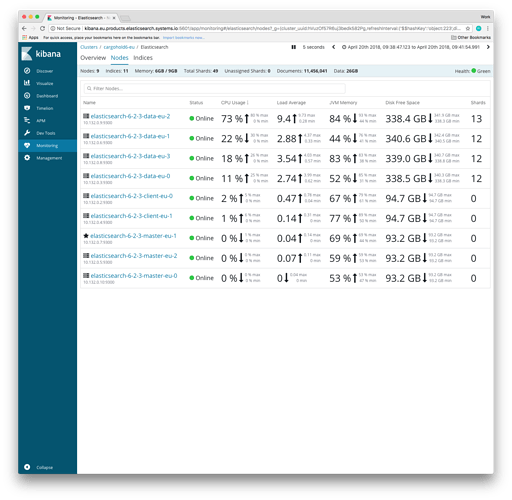

The screenshot above was taken during indexing, yes. CPU is fine aside from one data node being given most of the work. I just imported again and got this as a result:

Disk I/O is also fine, even on the data node that is being given the most work:

iowait also looks fine. The data node being given the most work obviously has a higher iowait, but only slightly; it maxed out at 0.40.

Documents aren't nested and I'm not using parent/child either.

Document IDs are being set by Elasticsearch.

How many shards and replicas does the index you are indexing into have? Are you by any chance using routing?

@Christian_Dahlqvist

I leave the shard count at the default, so 5, and I set the replicas to 0 before indexing, but as soon as I'm done I set them to 1. I'm not using routing, no.

I also set the refresh interval to -1 before indexing and then manually refresh once indexing is done.
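For reference, the settings changes described above could be expressed as the following request payloads. This is only a sketch that builds the requests without sending them; the function name and the index name `events` are hypothetical, and the order of the two steps after the load is an assumption.

```python
import json


def bulk_load_steps(index):
    """Return (method, path, body) tuples to run before and after a bulk load."""
    settings_path = "/{}/_settings".format(index)
    before = [
        # No replicas and no periodic refresh while importing.
        ("PUT", settings_path, json.dumps(
            {"index": {"number_of_replicas": 0, "refresh_interval": "-1"}})),
    ]
    after = [
        # Manual refresh once indexing is done, then add the replica back.
        ("POST", "/{}/_refresh".format(index), None),
        ("PUT", settings_path, json.dumps(
            {"index": {"number_of_replicas": 1}})),
    ]
    return before, after


before, after = bulk_load_steps("events")
```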

5 shards across 4 data nodes means one of the nodes will have twice the load of the others. Do you by any chance have 2 shards on the node with higher load?
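The arithmetic behind this can be sketched as follows, assuming the shards of a single index are spread as evenly as possible and each shard receives a similar share of the writes. The function name is hypothetical.

```python
def shards_per_node(num_shards, num_nodes):
    """Most even split of num_shards over num_nodes, largest counts first."""
    base, extra = divmod(num_shards, num_nodes)
    return [base + 1 if i < extra else base for i in range(num_nodes)]


five_on_four = shards_per_node(5, 4)  # one node holds 2 shards, the rest 1
four_on_four = shards_per_node(4, 4)  # every node holds exactly 1 shard
```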

I just dropped down to 2 data nodes using 5 shards and the same problem occurs. One data node is being given most of the load. Any idea why one data node is being given most of the load rather than it being balanced?

Can you try using 4 primary shards instead so that an even distribution is possible?

With or without replicas it should allow an even distribution across the 4 data nodes.
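To check where the primaries actually ended up, one option is to count them per node from the rows returned by `GET _cat/shards?format=json`. The sketch below assumes each row carries the `index`, `shard`, `prirep` and `node` fields; the helper name, the index name `events` and the sample rows are hypothetical illustration data, not output from this cluster.

```python
from collections import Counter


def primaries_per_node(shard_rows, index):
    """Count primary shards of one index on each node."""
    return Counter(
        row["node"]
        for row in shard_rows
        if row["index"] == index and row["prirep"] == "p"
    )


# Hypothetical rows shaped like _cat/shards?format=json output.
sample_rows = [
    {"index": "events", "shard": "0", "prirep": "p", "node": "data-eu-0"},
    {"index": "events", "shard": "1", "prirep": "p", "node": "data-eu-1"},
    {"index": "events", "shard": "2", "prirep": "p", "node": "data-eu-2"},
    {"index": "events", "shard": "3", "prirep": "p", "node": "data-eu-3"},
    {"index": "events", "shard": "0", "prirep": "r", "node": "data-eu-1"},
]

counts = primaries_per_node(sample_rows, "events")
```

An even layout shows the same count for every data node; a node with a higher count than the others would be expected to take more of the bulk load.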