dyl

April 5, 2018, 12:58pm

1

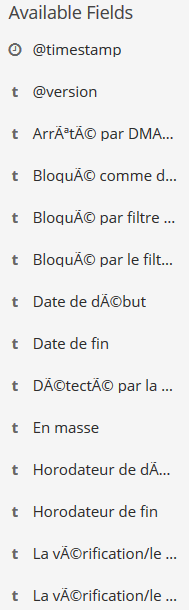

Hey community, I just imported a CSV into Elasticsearch, and I would like to know whether it is possible to rename these columns:

```json
"Bloqué par le filtrage par réputation" : {
  "type" : "text",
  "fields" : {
    "keyword" : {
      "type" : "keyword",
      "ignore_above" : 256
    }
  }
},
"Date de début" : {
  "type" : "text",
  "fields" : {
    "keyword" : {
      "type" : "keyword",
      "ignore_above" : 256
    }
  }
},
```

Thanks for your answers!
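A minimal sketch of renaming the columns on the client side before indexing, assuming the CSV has a header row. The target names `blocked_by_reputation_filter` and `start_date` are hypothetical, chosen only for illustration; columns missing from the mapping keep their original names.

```python
import csv
import io

# Hypothetical target names, not defined by IronPort or Elasticsearch.
RENAMES = {
    "Bloqué par le filtrage par réputation": "blocked_by_reputation_filter",
    "Date de début": "start_date",
}


def rename_columns(row, renames=RENAMES):
    """Return a copy of `row` with keys renamed; unmapped keys are kept as-is."""
    return {renames.get(key, key): value for key, value in row.items()}


def read_renamed_rows(text):
    """Parse CSV text (header row first) and yield each row with renamed keys."""
    for row in csv.DictReader(io.StringIO(text)):
        yield rename_columns(row)


if __name__ == "__main__":
    sample = "Date de début,Bloqué par le filtrage par réputation\n2018-04-05,42\n"
    for doc in read_renamed_rows(sample):
        print(doc)  # {'start_date': '2018-04-05', 'blocked_by_reputation_filter': '42'}
```

Inside a Logstash pipeline, the `mutate` filter's `rename` option does the equivalent job. Documents already indexed under the old names would still have to be reindexed.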

dadoonet

April 5, 2018, 1:19pm

2

You need to reindex, and make sure you are using UTF-8 as the character encoding.
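The "weird characters" are usually mojibake: text encoded with one charset and decoded with another. The sketch below reproduces this for UTF-8 bytes read as Latin-1, which is one common combination; whether it is the one affecting this particular export is an assumption.

```python
def mojibake(text, written_as="utf-8", read_as="latin-1"):
    """Simulate text encoded with one charset and decoded with another."""
    return text.encode(written_as).decode(read_as)


def repair(garbled, written_as="utf-8", read_as="latin-1"):
    """Undo `mojibake`: re-encode with the wrong charset, decode with the right one."""
    return garbled.encode(read_as).decode(written_as)


if __name__ == "__main__":
    garbled = mojibake("Bloqué")
    print(garbled)          # BloquÃ©
    print(repair(garbled))  # Bloqué
```

Repairing after the fact only works when the exact wrong/right charset pair is known, which is why fixing the encoding at ingestion time and reindexing is the cleaner route.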

dyl

April 5, 2018, 1:23pm

3

The problem is that the CSV I'm importing is what produces these weird characters, and I cannot change the export encoding of my CSV.
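Even when the export encoding cannot be changed, it can usually be identified and converted. A minimal sketch, assuming the file is either UTF-8 or a single-byte Windows encoding (`cp1252` is a guess here, not something IronPort is known to use): strict UTF-8 decoding rejects most non-UTF-8 byte sequences, so a failure suggests falling back to the other charset before re-encoding as UTF-8.

```python
def guess_and_decode(data, fallback="cp1252"):
    """Decode bytes as UTF-8 if valid, otherwise with a fallback charset.

    Returns (text, charset_used). Valid UTF-8 is a heuristic signal, not proof.
    Note: cp1252 leaves a few byte values undefined; decoding them raises
    UnicodeDecodeError.
    """
    try:
        return data.decode("utf-8"), "utf-8"
    except UnicodeDecodeError:
        return data.decode(fallback), fallback


def to_utf8(data, fallback="cp1252"):
    """Re-encode bytes as UTF-8 after guessing the source charset."""
    text, _ = guess_and_decode(data, fallback)
    return text.encode("utf-8")


if __name__ == "__main__":
    raw = "Date de début".encode("cp1252")
    text, charset = guess_and_decode(raw)
    print(charset, text)  # cp1252 Date de début
```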

dadoonet

April 5, 2018, 1:37pm

4

What do you use to import the CSV?

dyl

April 5, 2018, 1:49pm

5

It is a security CSV export from IronPort (a piece of software), and I can't change anything about it.

dadoonet

April 5, 2018, 2:57pm

6

I did not mean exporting to CSV; I meant: how did you import the CSV file into Elasticsearch?

dyl

April 5, 2018, 9:34pm

7

Sorry, I had misread.

I'm using Logstash and Filebeat:

Filebeat watches the directory where I send my CSV files.

My Logstash pipeline receives the events with `input { beats { port => 5044 } }`.

dadoonet

April 6, 2018, 7:38am

8

I think you need to configure an input codec (https://www.elastic.co/guide/en/logstash/6.2/plugins-codecs-line.html) and set the right `charset`.

I moved the question to #logstash, as I feel that is where this should be solved.
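The `charset` setting on the line codec tells Logstash which encoding the incoming bytes use so they can be converted to UTF-8. The Python sketch below only mimics that idea for line-oriented input (split on newlines, decode each line from a declared charset); it is not Logstash's implementation, and `ISO-8859-1` as the IronPort export charset is an assumption to check against a sample file.

```python
def decode_lines(data, charset="ISO-8859-1", delimiter=b"\n"):
    """Split raw bytes into lines and decode each from `charset` to str.

    Splitting on a byte delimiter is only safe for ASCII-compatible charsets
    (UTF-8, Latin-1, cp1252); it would break multi-byte encodings like UTF-16.
    """
    lines = data.split(delimiter)
    if lines and lines[-1] == b"":
        lines.pop()  # drop the empty piece after a trailing delimiter
    # Strip a Windows-style carriage return, then decode to a Python str,
    # which can be re-encoded as UTF-8 for indexing.
    return [line.rstrip(b"\r").decode(charset) for line in lines]


if __name__ == "__main__":
    raw = "Date de début;Bloqué\r\n2018-04-05;42\n".encode("iso-8859-1")
    for line in decode_lines(raw):
        print(line)
```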

system

May 4, 2018, 7:38am

9

This topic was automatically closed 28 days after the last reply. New replies are no longer allowed.