Hello,

I use a graylog server, with elasticsearch and kibana installed on it. I recently used curator to send indexes to aws S3, then to delete them, in order to save space on my server.

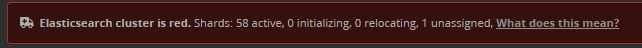

All of this works fine, but an error message now appears in Graylog and Kibana, because they cannot find the indexes that were deleted.

Do you know how to fix this problem? Thank you

A cluster in the red state means that one or more primary shards are considered to be missing. That's what "unassigned" means. Curator typically cannot cause a red state without something else having gone wrong: it uses the Elasticsearch APIs to delete, snapshot, allocate, etc., and those operations do not of themselves cause a shard to appear unassigned. I am not categorically stating that there is no correlation between your having run Curator and this state. Just because it shouldn't happen doesn't mean it didn't happen.

To correct a red state, the simplest fix is to remove the index with the unassigned shard, provided you no longer need its data. You can run:

GET /_cat/indices?v

and it will list your indices, along with the health and status of each:

health status index uuid pri rep docs.count docs.deleted store.size pri.store.size

green open .monitoring-beats-7-2021.04.14 kqoWIZ84TVKXwuFBihHvNQ 1 1 96900 0 124.1mb 62mb

...

Note that the first column (health) shows the health of each index. You need only find the one that is red, and then delete that index by name:

DELETE /some-index-name

NOTE: Don't just jump in and delete it if the red index is a system index. That seems highly unlikely here, though, as you'd have many more problems than a "shard failed" message. A system index is one that starts with a period, like .security or the like. A .monitoring-* index is not a system index; it merely holds monitoring data and is generally safe to delete.
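If the index list is long, the lookup described above can be scripted. The following is only a minimal sketch, assuming you have saved the text output of `GET /_cat/indices?v` and that it has the column header shown earlier. The function name `red_indices` and the `graylog_12` row (including its UUID) are made up for illustration. It reports each red index and whether its name starts with a period, so you can pause before deleting anything that might be a system index.

```python
from typing import List, Tuple


def red_indices(cat_output: str) -> List[Tuple[str, bool]]:
    """Return (index_name, starts_with_period) for every red index.

    Expects the text of `GET /_cat/indices?v`, header row included.
    """
    lines = [ln for ln in cat_output.splitlines() if ln.strip()]
    if not lines:
        return []
    header = lines[0].split()
    health_col = header.index("health")
    index_col = header.index("index")
    found = []
    for line in lines[1:]:
        fields = line.split()
        # Skip rows too short to hold both columns.
        if len(fields) <= max(health_col, index_col):
            continue
        if fields[health_col] == "red":
            name = fields[index_col]
            found.append((name, name.startswith(".")))
    return found


# Sample input: the first data row comes from the output above;
# the red row is hypothetical and only there to exercise the function.
SAMPLE = """\
health status index uuid pri rep docs.count docs.deleted store.size pri.store.size
green open .monitoring-beats-7-2021.04.14 kqoWIZ84TVKXwuFBihHvNQ 1 1 96900 0 124.1mb 62mb
red open graylog_12 hypotheticalUuid000000 1 0
"""

if __name__ == "__main__":
    for name, dotted in red_indices(SAMPLE):
        note = " (starts with a period, check before deleting)" if dotted else ""
        print(f"DELETE /{name}{note}")
```

The script only prints the `DELETE` line for each red index and does not contact the cluster, so you still review and run the request yourself.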

Hello @theuntergeek ,

It worked for me, thanks
