i later corrected and finalised beatconfig as below

###################### Filebeat Configuration Example #########################

# This file is an example configuration file highlighting only the most common

# options. The filebeat.reference.yml file from the same directory contains all the

# supported options with more comments. You can use it as a reference.

#

# You can find the full configuration reference here:

# https://www.elastic.co/guide/en/beats/filebeat/index.html

# For more available modules and options, please see the filebeat.reference.yml sample

# configuration file.

#=========================== Filebeat prospectors =============================

filebeat.prospectors:

Each - is a prospector. Most options can be set at the prospector level, so

you can use different prospectors for various configurations.

Below are the prospector specific configurations.

#============================= Filebeat modules ===============================

#filebeat.config.modules:

Glob pattern for configuration loading

#path: ${path.config}/modules.d/*.yml

Set to true to enable config reloading

#reload.enabled: false

Period on which files under path should be checked for changes

#reload.period: 10s

#==================== Elasticsearch template setting ==========================

setup.template.settings:

#index.number_of_shards: 3

#index.codec: best_compression

#_source.enabled: false

#================================ General =====================================

The name of the shipper that publishes the network data. It can be used to group

all the transactions sent by a single shipper in the web interface.

#name:

The tags of the shipper are included in their own field with each

transaction published.

#tags: ["service-X", "web-tier"]

Optional fields that you can specify to add additional information to the

output.

#fields:

env: staging

#============================== Dashboards =====================================

These settings control loading the sample dashboards to the Kibana index. Loading

the dashboards is disabled by default and can be enabled either by setting the

options here, or by using the -setup CLI flag or the setup command.

#setup.dashboards.enabled: false

The URL from where to download the dashboards archive. By default this URL

has a value which is computed based on the Beat name and version. For released

versions, this URL points to the dashboard archive on the artifacts.elastic.co

website.

#setup.dashboards.url:

#============================== Kibana =====================================

Starting with Beats version 6.0.0, the dashboards are loaded via the Kibana API.

This requires a Kibana endpoint configuration.

#setup.kibana:

Kibana Host

Scheme and port can be left out and will be set to the default (http and 5601)

In case you specify and additional path, the scheme is required: http://localhost:5601/path

IPv6 addresses should always be defined as: https://[2001:db8::1]:5601

#host: "localhost:5601"

#============================= Elastic Cloud ==================================

These settings simplify using filebeat with the Elastic Cloud (https://cloud.elastic.co/).

The cloud.id setting overwrites the output.elasticsearch.hosts and

setup.kibana.host options.

You can find the cloud.id in the Elastic Cloud web UI.

#cloud.id:

The cloud.auth setting overwrites the output.elasticsearch.username and

output.elasticsearch.password settings. The format is <user>:<pass>.

#cloud.auth:

#================================ Outputs =====================================

Configure what output to use when sending the data collected by the beat.

#-------------------------- Elasticsearch output ------------------------------

#output.elasticsearch:

Array of hosts to connect to.

#hosts: ["localhost:9200"]

Optional protocol and basic auth credentials.

#protocol: "https"

#username: "elastic"

#password: "changeme"

#----------------------------- Logstash output --------------------------------

output.logstash:

The Logstash hosts

hosts: ["10.52.33.97:63354"]

Optional SSL. By default is off.

List of root certificates for HTTPS server verifications

#ssl.certificate_authorities: ["/etc/pki/root/ca.pem"]

Certificate for SSL client authentication

#ssl.certificate: "/etc/pki/client/cert.pem"

Client Certificate Key

#ssl.key: "/etc/pki/client/cert.key"

#================================ Logging =====================================

Sets log level. The default log level is info.

Available log levels are: critical, error, warning, info, debug

#logging.level: debug

At debug level, you can selectively enable logging only for some components.

To enable all selectors use ["*"]. Examples of other selectors are "beat",

"publish", "service".

#logging.selectors: ["*"]

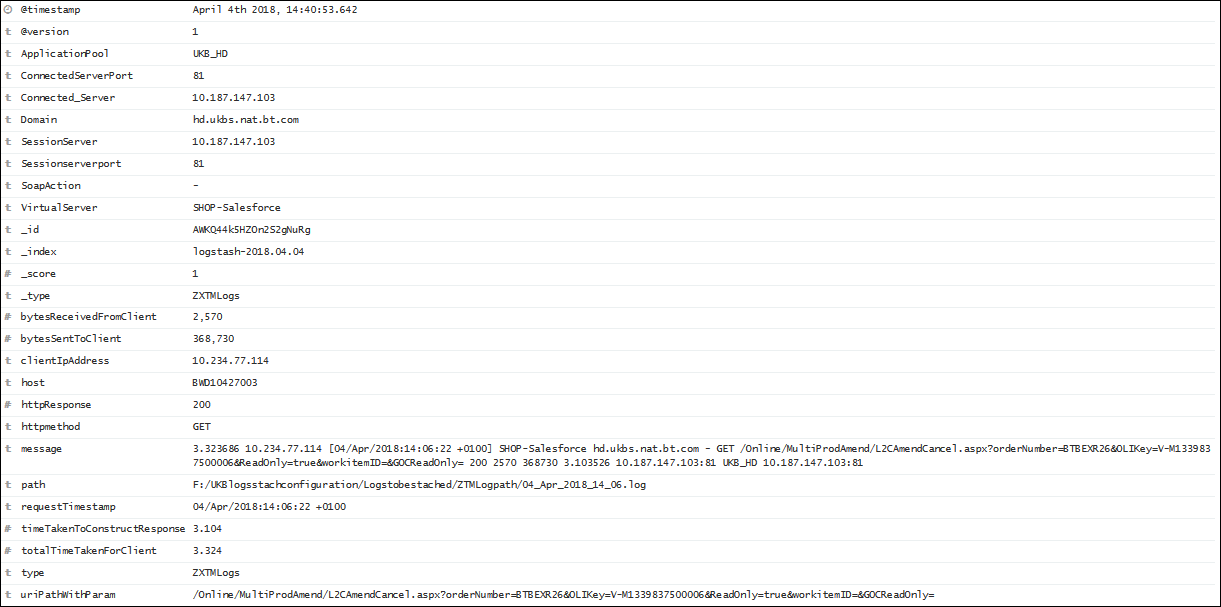

now the data is back on kibana, but still its not getting parsed as per my expectation