I am using elastic 6.4, and using /_xpack/sql to output some columns. Due to use order by command, have to average number columns that output just have 1 decimal place.

In my case, I use my local vbox with ubuntu 18.04 and elasticsearch sql can output many decimal place, but I using the redhat machine that it output the average column like 0.0.

Can someone help me to get the correct settings?

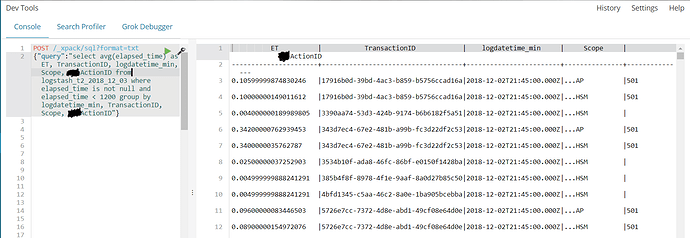

vbox picture like below:

redhat machine1 like below:

update:

I have two redhat machine, and one works normally.

redhat machine2 like below:

Hello. Does my environment of elasticsearch set wrong?

Update:

Using es query and output result didn't have decimal

And another redhat machine has same problem in this morning.

Hi @zxc654951,

I see that every time you get 0.0 you are using index logstash_t2_2018_11_23. A fair test between two different machines would be the same query on the same index. But, in your case, you perform the query on two different indices. To me, your tests don't seem relevant for the issue.

Please, test the same query on the same index on both machines (the one showing 0.0 and the one showing "good" numbers).

Sorry, I can't got the logstash_t2_2018_11_23 or logstash_t2_2018_11_23 because I delete those indices. Therefor, I got "good" numbers with delete all indices and re-logstash data. It query normally now, but I consider deleted that is not a good solution.

Finally, I don't know what can solve this question.

My assumption is that the index you tested on didn't have good data in it. And, thus, you got the 0.0 everywhere. Next time you notice this issue, please test the same query on the same index on multiple machines.

Thanks.

Two machine load difference data source but the server's settings are same. Queryed data maybe not like you want.

Some problem is the same index in same machine which will query different result with time pass and indices grow. If I get it again then I will update the content. Thanks.

I encounter the problem again. My machine and ELK's conf, yml... are not change.

You can see my the results of sql query and discover of kibana below:

When I change the query index, the results are different for elapsed_time that one is decimal and another is 0.0. But in the discover is show the elapsed_time is good. Because this query problem, I can't average the elapsed_time. Maybe my English is poor to show my problem, but I hope some people can tell me how to solve this problem. Thanks a lot.

What is the result of these two requests?

GET logstash_t2_2018_12_04/_mapping/*/field/elapsed_time

and

GET logstash_t2_2018_12_03/_mapping/*/field/elapsed_time

I try two request and result as follow:

{ "logstash_t2_2018_12_04": { "mappings": { "doc": { "elapsed_time": { "full_name": "elapsed_time", "mapping": { "elapsed_time": { "type": "long" } } } } } } }

{ "logstash_t2_2018_12_03": { "mappings": { "doc": { "elapsed_time": { "full_name": "elapsed_time", "mapping": { "elapsed_time": { "type": "float" } } } } } } }

It's seem data type be changed but I don't change any setting. How can I do next step?

The data type didn't change. If this is data coming from Logstash and you don't have an index template in which you specifically say that elapsed_time should be float then Elasticsearch will guess the type of the field from the first document that comes to the index. If it happens that the first value coming in doesn't have decimals, then Elasticsearch will not make that field a float.

So, I highly suggest defining an index template for these indices so that when a new index gets created every day, it will use that template for its mapping. These are index templates: https://www.elastic.co/guide/en/elasticsearch/reference/current/indices-templates.html.

Also, I strongly suggest reading this blog post, as it gives you step-by-step instructions (and explanations) on how to define your own template and why: https://www.elastic.co/blog/logstash_lesson_elasticsearch_mapping

It works normally after I set index template, thanks.